A CASE VIGNETTE

After hearing the overhead page for emergency anesthesia help, an anesthesia professional rushed to an operating room where an ear, nose, and throat (ENT) case was in progress. On arrival, she noted an anesthetized patient turned 90 degrees away from the anesthesia machine with an ENT laryngoscope in place, an oxygen saturation of 84% on the pulse oximeter, and a blood pressure of 80/53 mmHg. She could hear the ventilator alarming, with “High Peak Inspiratory Pressure” flashing across the top of the screen. The anesthesia professional in the operating room at the time explained that the peak inspiratory pressures had crept up quickly and that ventilation had become difficult over the last several minutes. The patient had a history of asthma, and despite bronchodilators and increased anesthetic, the apparent bronchospasm persisted. Another anesthesia professional auscultated and reported that there was no wheezing and no audible air movement; meanwhile, another colleague was preparing epinephrine. The anesthesia professional who had responded to the emergency page examined the patient from endotracheal tube to circuit to machine, then looked into the patient’s mouth, where she saw that the small endotracheal tube was kinked, out of sight of the anesthesia team. She relieved the bend and the ventilator alarm ceased its high-pitched whine. As the oxygen saturation quickly climbed, her colleagues’ faces showed both appreciation and embarrassment. How could they have missed that simple problem? The other emergency responders noted that they were so focused on assisting their colleague that they didn’t question the working diagnosis of bronchospasm. The in-operating room anesthesia professional noted that the history, timing, and signs led him to believe bronchospasm had to be what was happening. The responding anesthesia professional, taking in new information without that context, was able to correctly diagnose the problem.
Unbeknownst to these anesthesia professionals, they were suffering from the effects of cognitive bias.

BACKGROUND

Cognitive biases affect clinicians by allowing a practitioner to create their own subjective reality, which may alter their perception of a data point. This “systematic pattern of deviation from an established norm or rationality in judgment” may alter one’s practices and behavior.8 Cognitive bias affects all humans, not just medical professionals, and can cause errors in the medical care of individual patients or in public health policies that affect whole populations.9

Cognitive bias has long been understood to contribute to errors in medicine and to affect patient safety.10,11 It can significantly impair decision-making by clinicians, including anesthesia professionals, potentially jeopardizing the lives of patients.11,12 By first understanding cognitive biases and how they affect our practice, we may mitigate their effect and improve patient safety.

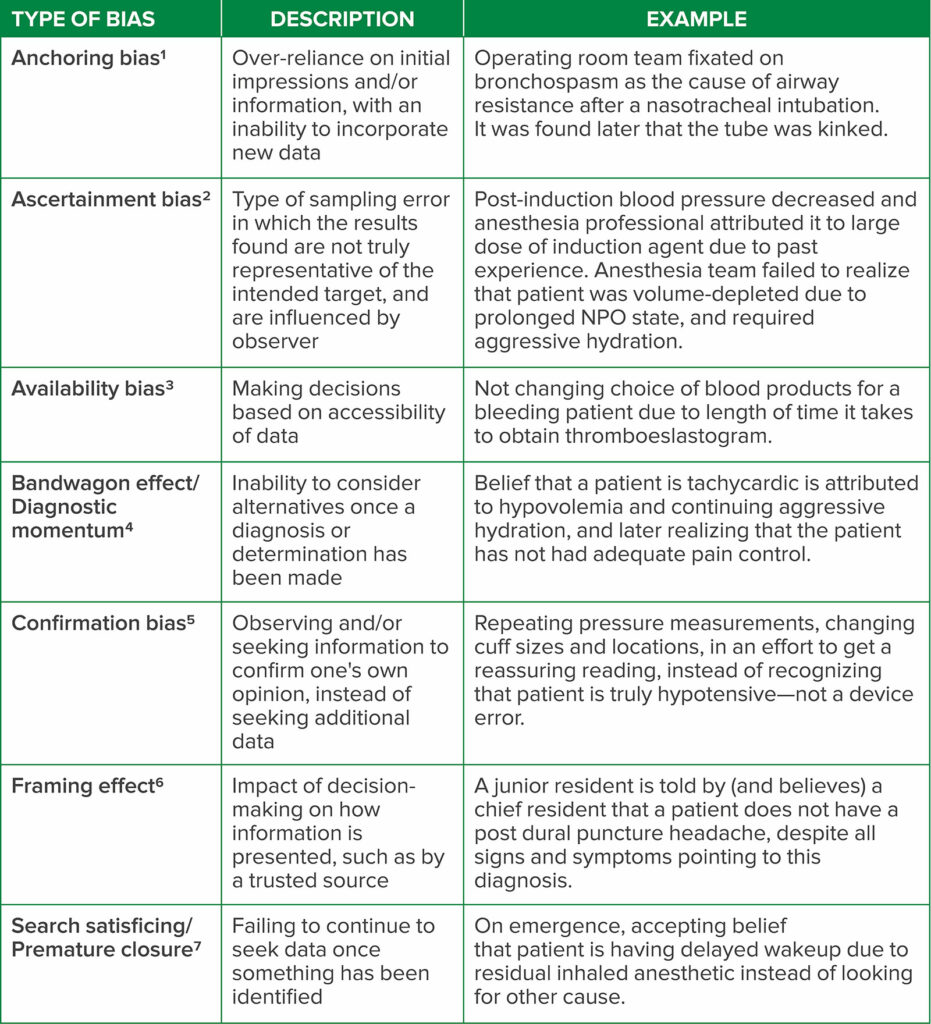

In the case presented, several cognitive biases were at play, including availability bias and the bandwagon effect. Availability bias describes a psychological phenomenon in which decisions are made based on the data at hand, without seeking additional data.13 The bandwagon effect, also known as diagnostic momentum, refers to an inability to consider alternatives once a diagnosis or determination has been made.14 A variety of frequently observed biases may afflict anesthesia professionals (Table 1).12,15

Table 1: A sampling of cognitive biases that may occur in anesthesiology and the practice of perioperative medicine, including descriptions and examples of each type.

THE EFFECTS OF COGNITIVE BIAS ON ERRORS

Errors that occur in the perioperative period often result from cognitive bias, with reports that as many as 32.7% of all postoperative complications are affected, at least in part, by bias.16 Specific types of cognitive bias have been identified as factors contributing to errors in anesthetic care. Confirmation bias, for example, is the act of observing or seeking information that confirms one’s own opinion, instead of seeking additional information that may challenge one’s current belief. In a study of a series of esophageal intubations that resulted in catastrophic outcomes for patients,17 signs such as observation of thoracic movement, auscultation of the chest, fogging in the endotracheal tube, and perception of the tube passing through the vocal cords were used to “confirm” a practitioner’s belief that intubation had been successful, instead of seeking the definitive capnography tracing to confirm tube placement.18

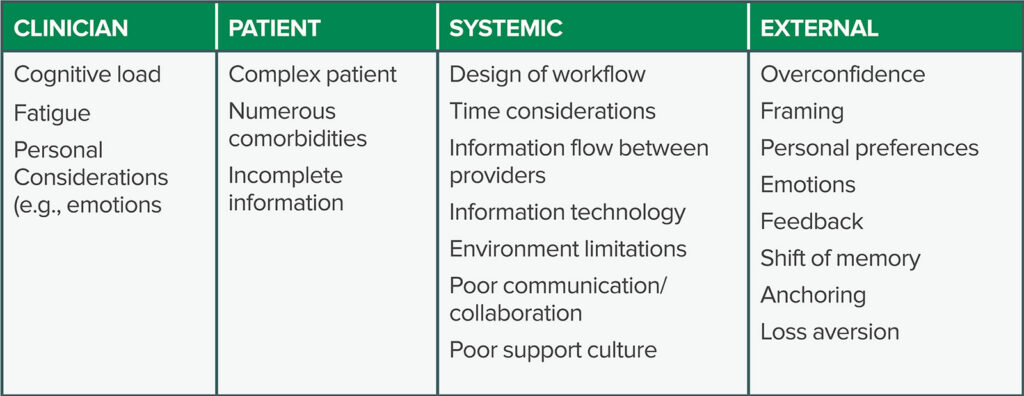

Different factors contribute to cognitive bias in health care professionals. These factors may be generally categorized into those affecting the health care professional, the patient, and systemic or external factors (Table 2). For example, factors such as cognitive overload, fatigue, and sleep deprivation have been shown to have a deleterious effect on health care professionals, increasing the risk of cognitive bias leading to errors and lapses in patient safety.19 Furthermore, a variety of irrational factors influence clinical decision-making in anesthesiology, including framing, personal preferences, emotions, feedback, and loss aversion.20

Table 2: Factors that may cause cognitive bias in anesthesiology, including those directly attributed to the patient, clinician, or systemic design. These are all potentially affected by external factors such as overconfidence and loss aversion.

REDUCING COGNITIVE BIAS

It is important to reduce diagnostic error attributed to cognitive bias when possible. There are several main categories of effective cognitive interventions: 1) improvement of knowledge and experience via tools such as simulation, feedback, and education, 2) improvement of reasoning and decision-making skills using tools such as reflective practice and metacognitive review, and 3) improvement of assistance in decision-making with aids such as electronic health records and integrated decision support.21

It is likely that the most important approach to reducing cognitive bias is promoting awareness of such confounding factors among medical personnel. Awareness by anesthesia professionals may be achieved by using learning material, scholarly publications, didactics, and simulation.22 Fixation errors, for example, are a type of error in which focus is placed on one aspect of a situation, while other, more relevant information is ignored.22 These errors may be caused by anchoring bias, and may be avoided when awareness of them leads to strategies such as ruling out the worst-case scenario, understanding that first assumptions may be wrong, consideration of artifacts as the last explanation of a problem, and avoiding use of a prior conclusion with current team members.22 Nonetheless, awareness alone is not sufficient to combat bias. Past literature has described a “bias blind spot,” a phenomenon in which a person experiences a false sense of invulnerability to bias, which is more common in providers with greater cognitive sophistication.20

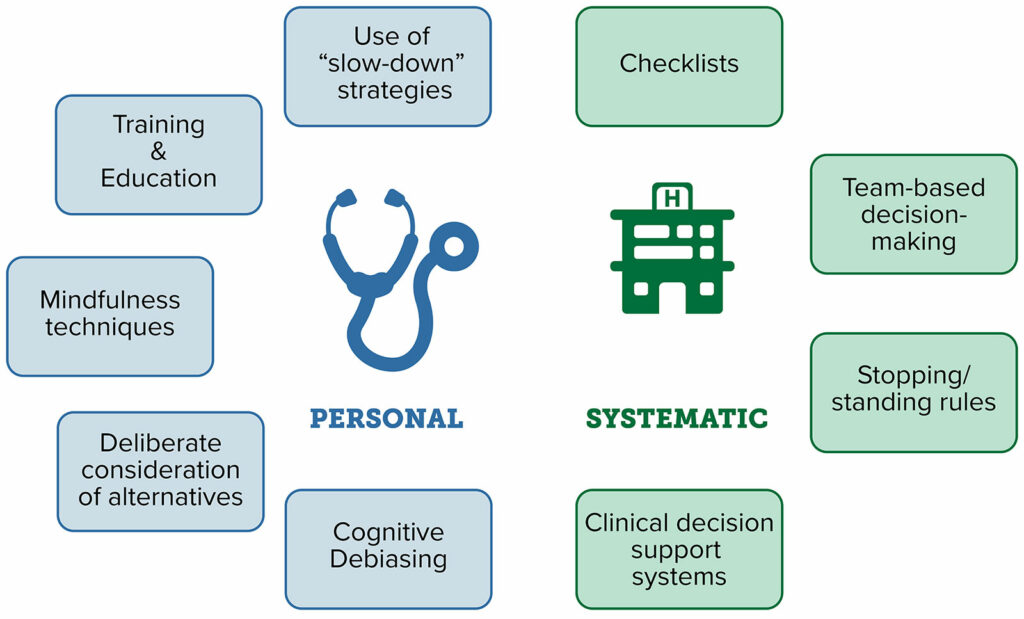

Strategies that may be employed to reduce cognitive bias can often be categorized into interventions that affect a clinician on a personal basis versus those that are implemented on a systematic or system-wide basis (Figure 1). Individual-level strategies include training and education, mindfulness techniques, and deliberate consideration of alternatives.23 Systematic strategies include use of checklists, team-based decision-making, and clinical decision support systems, such as integrated prompts in electronic health records.23 Decision-making checklists, modeled after those used in the aviation industry, reduce the risk of adverse events in the operating room.22 In a simulated setting, checklists were shown to result in a 6-fold reduction in failure to adhere to critical steps in managing a crisis, even while adjusting for learning or fatigue effects.23

Figure 1: Strategies that may enable medical professionals to combat bias, via prevention, recognition, and active interventions to mitigate its effect in real time.

Unfortunately, all of these strategies have limitations, chiefly the lack of objective evidence to support several of them. Stopping and standing rules, which are constructs designed to determine when information-gathering can stop, have no published evidence to support their use. Similarly, the use of “must-not-miss alternatives,” in which one considers diagnoses that must not be missed before making a final diagnosis, is also not supported by published evidence. In addition, there seems to be a separation between the efficacy of such strategies to improve diagnostic acumen and treatment or patient outcomes. For example, despite the implementation of clinical decision support systems, such as those to increase adherence to best practices and reduce medication errors, there is little evidence that they improve clinical diagnosis.23 This may be due to limited study of their effect on patient outcomes, as many studies of clinical decision support systems focus on whether an intervention achieves a desired endpoint, such as prompting the order of a laboratory test or imaging study, rather than on its impact on clinical diagnosis.24

COMBATING COGNITIVE BIAS IN ANESTHESIOLOGY

We advocate for a two-step approach to recognizing and combating cognitive bias on a daily basis in the practice of anesthesiology. The first step is education and awareness. Anesthesia professionals must understand that these biases exist and that they can affect patient care. Bias often affects medical practitioners in detecting changes in patients, diagnosing clinical conditions, and treating pathologies. Although awareness alone is not enough to combat bias, it is a necessary first step toward addressing the issue and developing strategies to remain cognizant of its impact on patient care and safety.

Next, it is important to combat bias both on a personal level and system-wide, which will often require customized interventions. Solutions are not universal, and must be individualized to different institutions, teams, and situations. For example, the bandwagon effect may be combatted successfully in one institution by intraoperative consultation with one’s colleagues. Conversely, in a smaller institution with limited personnel, diagnostic momentum may be more successfully avoided by using checklists or cognitive aids in collaboration with other perioperative providers. On a departmental and institutional level, the role that cognitive bias may have played should be considered in each adverse event review. Anesthesia groups should consider the use of simulation for both trainees and practicing clinicians to create educational scenarios that demonstrate cognitive bias in action and strategies to combat it. Simulation has been shown to be particularly useful in modeling team-based situational awareness and facilitating interdisciplinary communication, both important tools for combating cognitive bias, especially in challenging situations.25 Although there is not one universal approach that avoids cognitive bias in the practice of perioperative medicine, a combination of vigilance and thoughtful interventions offers a significant opportunity to improve the quality of anesthesia services and patient safety.

Anesthesia professionals are susceptible to cognitive biases, which can negatively impact patient care and contribute to medical errors. Anesthesiology necessitates a great deal of preparation for emergencies, which tend to occur infrequently but quickly. It is important not to neglect the mental and systematic preparation required to avoid cognitive bias. Anesthesia professionals should receive training in recognizing and combating cognitive biases. Strategies to combat cognitive biases should be implemented at both individual and institutional levels to improve patient safety.

George Tewfik, MD, MBA, FASA, CPE, MSBA, is an associate professor of anesthesiology at Rutgers New Jersey Medical School in Newark, NJ.

Stephen Rivoli, DO, MPH, MA, CPHQ, CPPS, is an assistant professor of anesthesiology at NYU Grossman School of Medicine in New York, NY.

Monica W. Harbell, MD, FASA, is an assistant professor of anesthesiology at the Mayo Clinic in Phoenix, AZ.

The authors have no conflicts of interest.

References

- Tversky A, Kahneman D. Judgment under uncertainty: heuristics and biases. Science. 1974 Sep 27;185(4157):1124-31. doi: 10.1126/science.185.4157.1124. PMID: 17835457

- Catalogue of Biases Collaboration, Spencer EA, Brassey J. Ascertainment bias. In: Catalogue Of Bias 2017: https://catalogofbias.org/biases/ascertainment-bias/. Accessed December 14, 2022.

- Fares WH. The ‘availability’ bias: underappreciated but with major potential implications. Crit Care. 2014 Mar 12;18(2):118. doi: 10.1186/cc13763. PMID: 25029621

- O’Connor N, Clark S. Beware bandwagons! The bandwagon phenomenon in medicine, psychiatry and management. Australas Psychiatry. 2019 Dec;27(6):603-606. doi: 10.1177/1039856219848829. Epub 2019 Jun 5. PMID: 31165616

- Mendel R, Traut-Mattausch E, Jonas E, et al. Confirmation bias: why psychiatrists stick to wrong preliminary diagnoses. Psychol Med. 2011 Dec;41(12):2651-9. doi: 10.1017/S0033291711000808. Epub 2011 May 20. PMID: 21733217

- Gong J, Zhang Y, Yang Z, et al. The framing effect in medical decision-making: a review of the literature. Psychol Health Med. 2013;18(6):645-53. Epub 2013 Feb 6. PMID: 23387993

- Croskerry P. Achieving quality in clinical decision making: cognitive strategies and detection of bias. Acad Emerg Med. 2002 Nov;9(11):1184-204. PMID: 12414468

- Landucci F, Lamperti M. A pandemic of cognitive bias. Intensive Care Med. 2021;47:636–637. PMID: 33108517

- Lechanoine F, Gangi K. COVID-19: pandemic of cognitive biases impacting human behaviors and decision-making of public health policies. Front Public Health. 2020;8:613290. PMID: 33330346

- Croskerry P. The importance of cognitive errors in diagnosis and strategies to minimize them. Acad Med. 2003;78:775–80. PMID: 12915363

- Saposnik G, Redelmeier D, Ruff CC, Tobler PN. Cognitive biases associated with medical decisions: a systematic review. BMC Med Inform Decis Mak. 2016;16:138. PMID: 27809908

- Beldhuis IE, Marapin RS, Jiang YY, et al. Cognitive biases, environmental, patient and personal factors associated with critical care decision making: a scoping review. J Crit Care. 2021;64:144–153. PMID: 33906103

- Fares WH. The ‘availability’ bias: underappreciated but with major potential implications. Crit Care. 2014;18:118. PMID: 25029621

- Whelehan DF, Conlon KC, Ridgway PF. Medicine and heuristics: cognitive biases and medical decision-making. Ir J Med Sci. 2020;189:1477–1484. PMID: 32409947

- Stiegler MP, Neelankavil JP, Canales C, Dhillon A. Cognitive errors detected in anaesthesiology: a literature review and pilot study. Br J Anaesth. 2012;108:229–235. PMID: 22157846

- Antonacci AC, Dechario SP, Antonacci C, et al. Cognitive bias impact on management of postoperative complications, medical error, and standard of care. J Surg Res. 2021;258:47–53. PMID: 32987224

- Jafferji D, Morris R, Levy N. Reducing the risk of confirmation bias in unrecognised oesophageal intubation. Br J Anaesth. 2019;122:e66–e68. PMID: 30857612

- Dale W, Hemmerich J, Moliski E, Schwarze ML, Tung A. Effect of specialty and recent experience on perioperative decision-making for abdominal aortic aneurysm repair. J Am Geriatr Soc. 2012;60:1889–1894. PMID: 23016733

- Croskerry P, Singhal G, Mamede S. Cognitive debiasing 1: origins of bias and theory of debiasing. BMJ Qual Saf. 2013;22 Suppl 2(Suppl 2):ii58–ii64. PMID: 23882089

- Stiegler MP, Tung A. Cognitive processes in anesthesiology decision making. Anesthesiology. 2014;120:204–217. PMID: 24212195

- Graber ML, Kissam S, Payne VL, et al. Cognitive interventions to reduce diagnostic error: a narrative review. BMJ Qual Saf. 2012;21:535–557. PMID: 22543420

- Ortega R, Nasrullah K. On reducing fixation errors. APSF Newsletter. 2019;33:102–103.

- Webster CS, Taylor S, Weller JM. Cognitive biases in diagnosis and decision making during anaesthesia and intensive care. BJA Educ. 2021;21:420–425. PMID: 34707887

- Kawamoto K, Houlihan CA, Balas EA, Lobach DF. Improving clinical practice using clinical decision support systems: a systematic review of trials to identify features critical to success. BMJ. 2005;330:765–768. PMID: 15767266

- Rosenman ED, Dixon AJ, Webb JM, et al. A simulation-based approach to measuring team situational awareness in emergency medicine: a multicenter, observational study. Acad Emerg Med. 2018;25:196–204. PMID: 28715105
